The New Workforce Intelligence Standard: What the Mobley Ruling, the EU AI Act, and Board Succession Reviews Now Require

AI Hiring Bias Accountability: What Happens When AI Gets It Wrong

The HR tech industry has a contradiction it needs to answer for. These are the companies whose entire market value is built on helping other organizations attract, develop, and retain human talent. They sell workforce intelligence. They advise CHROs on engagement, retention, and the future of work. In the first quarter of 2026, they are laying off their own people and citing artificial intelligence as the reason. This is happening at the same moment that AI hiring bias accountability has stopped being a theoretical question and become a federal-court matter with named plaintiffs and a certified class.

UKG cut 950 employees in April and cited “changes in technology driven by AI” as the rationale. The cut lands inside a broader pattern: across the tech sector in Q1 2026, more than 60% of announced layoffs explicitly named AI as a factor — up from 38% the prior year. That is not a company story. That is a market posture. And the industry watching those cuts is the same industry being asked to trust these vendors with their most sensitive organizational decisions.

The irony would be easier to absorb if the technology performing the cuts were proven, audited, and accountable. It is not.

The Tax Nobody Budgeted For

Here is what is not showing up on the productivity dashboards: the hours employees spend cleaning up after AI. Across industries, employees are describing a hidden cost: time spent checking, correcting, and explaining the AI outputs they are required to use. Exit interviews are surfacing it. Engagement surveys are capturing it. Workers have a name for it now. They are calling it the AI tax. The name matters because it signals the beginning of a formal accountability chain. The term is entering manager vocabulary, which means it is likely to start appearing in formal grievances and arbitration within 12 to 18 months. What began as hallway frustration is becoming documentation. And documentation becomes discovery.

The organizations that built their business case on gross AI productivity gains without measuring the drag on the other side of the ledger are now sitting on an exposure they did not price in. Companies that staked internal and external credibility on AI productivity claims now face a workforce building an evidence-based counter-case. That counter-case is not theoretical. It is being filed in federal court.

The Legal Frame Just Changed

In May 2025, the Mobley v. Workday case cleared the collective-action threshold under the Age Discrimination in Employment Act (ADEA). By March 2026, the case had survived a second motion to dismiss and an amended complaint reasserting state-law and disability claims, a pattern of legal momentum that signals the federal precedent is hardening, not retreating. This is not a regulatory notice or a pending rule. A federal court determined that a class of plaintiffs allegedly harmed by AI-driven hiring decisions may pursue collective action against the platform that made those decisions. The case advanced under the ADEA, which means the harm alleged is not hypothetical disparate impact. It is discriminatory outcome, at scale, attributed to an automated system.

The Question Without an Answer

What happens when AI gets it wrong? Not in the abstract. Specifically, who:

- is accountable when an AI-assisted hiring decision screens out a qualified candidate based on a protected characteristic?

- owns the outcome when an AI performance management tool flags an employee for review based on inputs the employee never saw and cannot contest?

- explains the decision when there is no human in the loop?

The dominant industry posture has been to defer the question. That posture is becoming a liability in the same quarter it was standard operating procedure. The organizations that come through this transition with their credibility intact will answer the question before a courtroom or a congressional hearing forces them to. They will publish an accountability framework before regulators require one. They will disclose how bias audits work, who reviews automated decisions, and what the appeals process looks like for someone who believes a machine got them wrong.

Answering the question means treating AI governance as a system problem, not just a compliance problem. The HR tech industry convinced the market that it understood the human side of technology better than anyone else. That argument now requires proof. The workers losing their jobs to fund AI investments, the employees logging invisible hours auditing outputs they did not ask for, and the plaintiffs asking a federal court to hold an algorithm accountable are all making the same basic claim: the humans in this equation still matter.

The question is whether the industry that built its business on that premise still believes it. The industry's stated confidence in AI and the floor-level backlash do not describe the same reality.
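For readers who want the bias-audit idea in concrete terms: one widely used first-pass screen is the four-fifths rule from the EEOC's Uniform Guidelines on Employee Selection Procedures. It compares each group's selection rate with the highest group's rate and treats a ratio below 0.8 as a signal worth investigating. The Python sketch below is illustrative only. The `GroupOutcome` structure and the function names are hypothetical, and a ratio under 0.8 is a prompt for human review, not a legal finding.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class GroupOutcome:
    """Screening results for one applicant group (hypothetical structure)."""
    group: str
    applicants: int
    advanced: int

    @property
    def selection_rate(self) -> float:
        # Share of applicants in this group who passed the automated screen.
        return self.advanced / self.applicants if self.applicants else 0.0


def adverse_impact_ratios(outcomes: List[GroupOutcome]) -> Dict[str, float]:
    """Divide each group's selection rate by the highest group's rate."""
    top_rate = max(o.selection_rate for o in outcomes)
    if top_rate == 0:
        raise ValueError("No group advanced any applicants; ratios are undefined.")
    return {o.group: o.selection_rate / top_rate for o in outcomes}


def groups_to_review(outcomes: List[GroupOutcome], threshold: float = 0.8) -> List[str]:
    """Return the groups whose ratio falls below the four-fifths threshold."""
    ratios = adverse_impact_ratios(outcomes)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)
```

With hypothetical counts of 100 of 200 applicants under 40 advancing and 60 of 200 applicants aged 40 and over advancing, the older group's ratio is 0.6, so `groups_to_review` would flag it. Publishing a check like this, along with who reviews the flagged results, is one way to make "how bias audits work" something an outsider can verify.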

So What Does “Getting It Right” Look Like?

The accountability gap described above is not hypothetical. It has a shape. And it maps almost exactly to what happens when organizations treat skills data as a reporting problem rather than a decision infrastructure problem. Most enterprises already have the data. What they are missing is trust in what that data means. Skills are self-reported or inconsistently validated. Job profiles drift by manager and region. Learning activity drifts from role requirements. When someone challenges a decision, internally or in a courtroom, the organization cannot reconstruct a coherent story: what evidence led to what outcome?

That is not an AI problem. That is a governance hole that AI tears wider. The question organizations need to answer before the next round of workforce decisions is a simple one. Can we explain, to a regulator, a works council, or a plaintiff’s attorney, how readiness was assessed, what evidence supported that assessment, and who was accountable for the outcome?
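One way to picture what answering that question requires is a decision record that stores the method, the evidence, and the accountable person together at the moment the decision is made. The Python sketch below is a hypothetical illustration, not TalentGuard's implementation. Every class and field name is an assumption chosen for readability.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
from typing import List, Optional, Tuple
import json


@dataclass(frozen=True)
class Evidence:
    """One input to a decision (all field names are hypothetical)."""
    source: str                          # e.g. "certification", "self_report"
    detail: str
    validated_by: Optional[str] = None   # None means nobody has verified it


@dataclass(frozen=True)
class ReadinessDecision:
    """Ties an outcome to its method, its evidence, and an accountable person."""
    subject_id: str
    role: str
    outcome: str
    method: str                          # how readiness was assessed
    evidence: Tuple[Evidence, ...]
    accountable: str                     # the human who owns the outcome
    decided_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def unvalidated(self) -> List[Evidence]:
        # Evidence nobody has verified is the weak point in any later challenge.
        return [e for e in self.evidence if e.validated_by is None]

    def explain(self) -> str:
        # Reconstruct the story: what evidence led to what outcome, and who owns it.
        lines = [
            f"{self.subject_id} assessed as '{self.outcome}' for {self.role}",
            f"Method: {self.method}",
            f"Accountable: {self.accountable}",
        ]
        for e in self.evidence:
            status = f"validated by {e.validated_by}" if e.validated_by else "UNVALIDATED"
            lines.append(f"Evidence [{e.source}]: {e.detail} ({status})")
        return "\n".join(lines)

    def to_json(self) -> str:
        # Serialize the full record so it can be stored in an append-only log.
        return json.dumps(asdict(self), sort_keys=True)
```

The record is frozen so that a decision cannot be quietly edited after the fact. A correction would be written as a new record, which keeps the change history intact.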

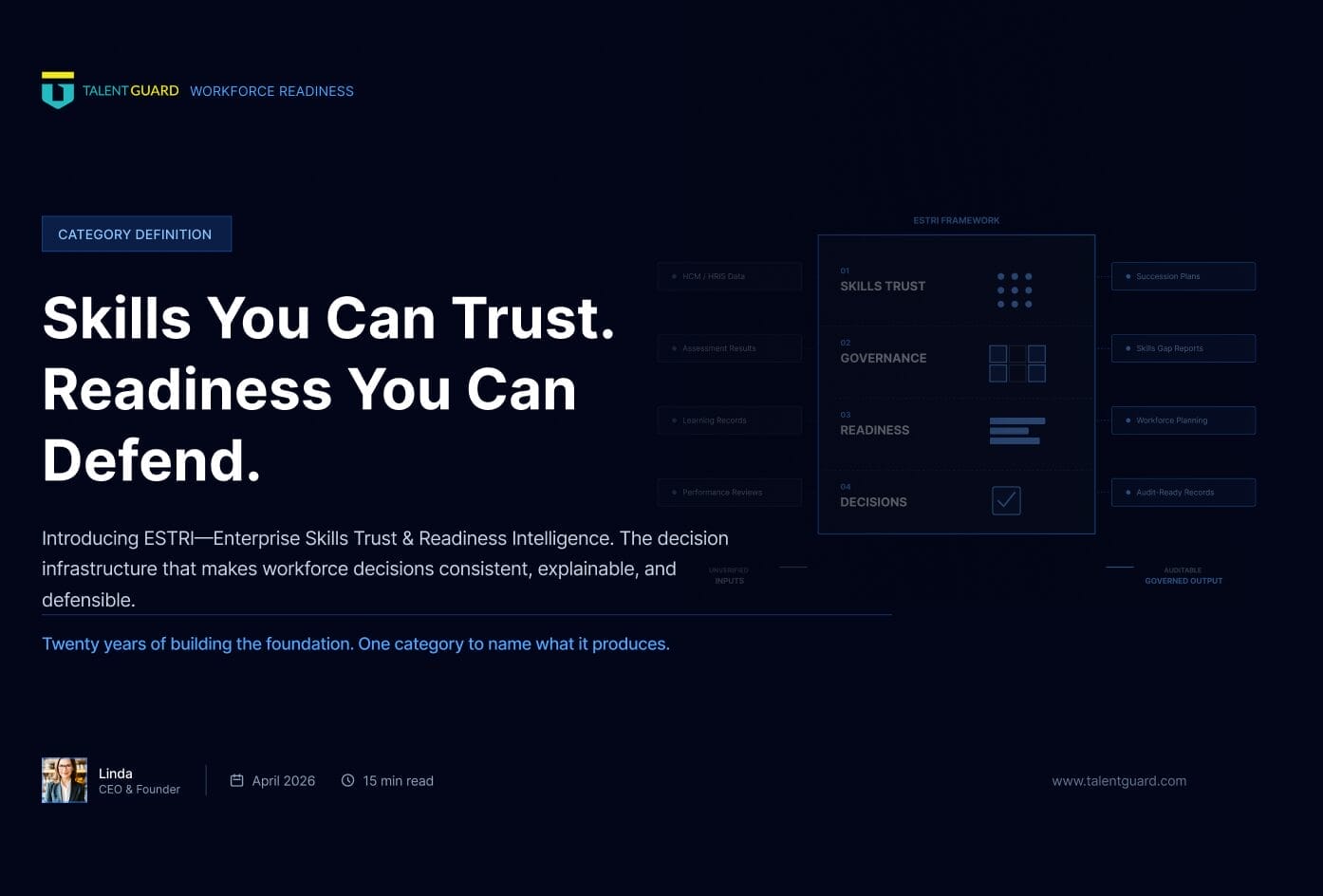

If the answer is not an immediate yes, the window to build that capability is now, not after the first formal challenge arrives. TalentGuard built Enterprise Skills Trust and Readiness Intelligence (ESTRI) specifically for this problem. ESTRI is not a dashboard. It is not an AI matching engine. It is decision infrastructure. A governed, explainable layer that turns fragmented skill signals into auditable readiness assessments that organizations can act on and defend.

If the issues raised here prompt questions your organization has not yet answered, that is the right starting point for a conversation. See how TalentGuard approaches decision-grade workforce intelligence.

About TalentGuard

TalentGuard powers Enterprise Skill Trust & Readiness Intelligence—so organizations can make talent decisions that are consistent, scalable, and defensible. We turn fragmented skills signals into a governed foundation: role-based standards, proficiency expectations, evidence and provenance, and a complete change history. On top of that foundation, TalentGuard delivers explainable role readiness and gap insights—then connects action loops (development, mobility, performance, succession, and certifications) to measurable progress. The result: a trusted system of record for role and skills data that supports audit-ready reporting, stronger workforce planning, and better outcomes across the talent lifecycle. Request a demo to see how TalentGuard helps you establish Skill Trust and operationalize readiness intelligence across your enterprise.
