Compliance Is a Design Document. Are You Reading It That Way?

Most HR leaders approach AI regulation the same way they approach a compliance audit: head down, running a checklist of things they’re not supposed to do. When a law drops with penalties attached, the first instinct is defensive.

There’s a more useful way to read NYC Local Law 144, the EU AI Act, and the wave of state-level AI legislation advancing across the country: not as a threat to your operations, but as a set of spec sheets.

When you flip that lens, something interesting happens. The frameworks stop looking like a patchwork of jurisdictional requirements and start reading like a coherent description of what the next generation of workforce intelligence needs to do. That description maps closely to what we built TalentGuard to deliver.

What a Spec Sheet Does

A spec sheet doesn’t tell you what you can’t build. It defines what the finished system must do: its functional requirements, its testable outputs, and the conditions it must meet in real-world operation. When you read regulatory frameworks this way, they don’t constrain your architecture. They define it.

NYC Local Law 144

NYC Local Law 144 requires organizations to conduct an annual independent bias audit for any automated employment decision tool used in hiring or promotion decisions within New York City.

Read it as a threat: audit or face penalties.

Read it as a spec:

- Produce decision outputs that an independent party can audit every year.

- Structure the data to make the decision logic traceable.

- Reproduce outcomes on demand.

If your system can’t do those three things, the audit isn’t the problem. Your architecture is. The same law requires employers to notify candidates before applying an automated employment decision tool to their evaluation. To do that, the system needs to know which tools you’re applying to which decisions and surface that information on demand. That’s a system capability: it requires a tool that can account for its own use.
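The rules New York City adopted to implement Local Law 144 build the bias audit around impact ratios: each demographic category’s selection rate divided by the selection rate of the most-selected category. The sketch below is a minimal illustration of that arithmetic only, assuming the tool’s outcomes are already logged as per-category counts; the function name and the toy numbers are hypothetical, and an actual audit is performed by an independent auditor on real data.

```python
from typing import Dict


def impact_ratios(selected: Dict[str, int], applicants: Dict[str, int]) -> Dict[str, float]:
    """Impact ratio per category: its selection rate divided by the highest selection rate."""
    # Selection rate = candidates the tool selected or advanced / candidates it assessed.
    rates = {
        category: selected.get(category, 0) / count
        for category, count in applicants.items()
        if count > 0  # skip empty categories rather than divide by zero
    }
    if not rates or max(rates.values()) == 0:
        raise ValueError("no selections recorded; impact ratios are undefined")
    top_rate = max(rates.values())
    # The most-selected category gets 1.0; every other category is measured against it.
    return {category: rate / top_rate for category, rate in rates.items()}


# Toy numbers for illustration only, not audit data.
ratios = impact_ratios(selected={"A": 50, "B": 20}, applicants={"A": 200, "B": 100})
print(ratios)  # {'A': 1.0, 'B': 0.8}
```

The calculation is trivial; the hard part is the architecture that makes the counts trustworthy, which is exactly the spec described above. The code only computes the ratios. Interpreting them is the auditor’s job.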

TalentGuard’s skills intelligence platform operates on structured, documented competency frameworks. We don’t rely on implicit or manager-dependent criteria. We define them, standardize them, and make them retrievable, because auditability depends on that foundation.

The EU AI Act

The EU AI Act classifies AI systems used in employment, workers management, and access to self-employment as high-risk. The Act requires high-risk systems to document their training data and support auditability before deployment.

Read it as a threat: a heavy compliance lift in an already complex environment.

Read it as a spec:

- Carry documented provenance for the data used to train the system and for every decision it produces. This “documentation” requirement is really a data lineage requirement: every consequential output needs a traceable chain of custody, from training data to deployed model to individual decision.

When TalentGuard maps skills to roles, assessments, and development pathways, that linkage isn’t incidental. We build it into the structure of the system. The connection between input criteria and output recommendation is the product. That’s what documented provenance looks like in a workforce system.
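To make “chain of custody” concrete, here is a minimal sketch, assuming each training-data snapshot, model version, and decision is stored as its own record and linked by content hashes. The record types and the `trace` helper are hypothetical illustrations, not TalentGuard’s implementation; the point is that a decision either walks back to a registered training snapshot or fails loudly.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Dict, List


def fingerprint(payload: dict) -> str:
    """Stable SHA-256 digest of a JSON-serializable record (keys sorted, so order doesn't matter)."""
    encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


@dataclass(frozen=True)
class DatasetVersion:
    dataset_id: str
    content_hash: str  # fingerprint of the exact training-data snapshot


@dataclass(frozen=True)
class ModelVersion:
    model_id: str
    trained_on: str  # content_hash of the DatasetVersion this model was trained on


@dataclass(frozen=True)
class DecisionRecord:
    decision_id: str
    model_id: str    # which deployed model produced the output
    input_hash: str  # fingerprint of the inputs the model saw
    output: str


def trace(decision: DecisionRecord,
          models: Dict[str, ModelVersion],
          datasets: Dict[str, DatasetVersion]) -> List[str]:
    """Walk decision -> model -> training data; fail loudly if any link is missing."""
    model = models.get(decision.model_id)
    if model is None:
        raise LookupError(f"{decision.decision_id}: model {decision.model_id} is not registered")
    dataset = datasets.get(model.trained_on)
    if dataset is None:
        raise LookupError(f"{model.model_id}: training snapshot {model.trained_on} is not registered")
    return [decision.decision_id, model.model_id, dataset.dataset_id]


# Hypothetical records for illustration.
snapshot = DatasetVersion("skills-snapshot-1", fingerprint({"rows": [["c-1", "sql", 3]]}))
model = ModelVersion("matcher-1", trained_on=snapshot.content_hash)
decision = DecisionRecord("dec-1", "matcher-1", fingerprint({"candidate": "c-1"}), "advance")
print(trace(decision, {"matcher-1": model}, {snapshot.content_hash: snapshot}))
# ['dec-1', 'matcher-1', 'skills-snapshot-1']
```

Keying the training snapshot by its content hash, rather than by a name someone can reuse, means a silently changed dataset shows up as a broken link instead of a quiet substitution.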

The EEOC’s iTutorGroup Settlement

In 2023, the EEOC settled a case against iTutorGroup, whose software automatically rejected female applicants aged 55 or older and male applicants aged 60 or older.

The settlement established something critical: automated rejection based on protected characteristics constitutes discrimination, regardless of intent. The system didn’t “mean” to discriminate. It didn’t matter.

Read it as a threat: automated tools create liability.

Read it as a spec:

- Require meaningful human review before acting on any decision that could produce differential outcomes. Not as a vague commitment to oversight, but as an explicit architectural requirement.

TalentGuard surfaces recommendations and competency gaps. We don’t make autonomous hiring or advancement decisions. Human managers review, interpret, and act. That’s not just product philosophy. It’s a functional requirement under the current regulatory environment.
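One way to make human review an architectural requirement rather than a policy statement is to make it impossible to act on a recommendation without a recorded review. The sketch below assumes a simple two-record model; `Recommendation`, `Review`, and `finalize` are hypothetical names for illustration. It also lets the reviewer replace the system’s suggestion, so the loop can actually change the outcome.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Recommendation:
    employee_id: str
    suggested_action: str  # what the system proposes, e.g. "promote"
    rationale: str         # the competency evidence behind the proposal


@dataclass(frozen=True)
class Review:
    reviewer_id: str
    approved: bool
    final_action: Optional[str] = None  # the reviewer's own decision when they reject the suggestion
    note: str = ""


def finalize(rec: Recommendation, review: Optional[Review]) -> str:
    """Return the action to take. No recorded human review means no action."""
    if review is None or not review.reviewer_id:
        raise PermissionError(f"{rec.employee_id}: no identified human review on record")
    if review.approved:
        return rec.suggested_action
    if review.final_action is None:
        raise ValueError("a rejected recommendation needs the reviewer's own decision")
    # The reviewer's decision replaces the system's suggestion: the loop can change the outcome.
    return review.final_action


rec = Recommendation("e-42", "promote", "meets all level-3 competencies for the target role")
print(finalize(rec, Review("mgr-7", approved=True)))                                      # promote
print(finalize(rec, Review("mgr-7", approved=False, final_action="development plan")))  # development plan
```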

The State-Level Layer

Colorado, Illinois, New Jersey, and more than a dozen other states continue to advance AI legislation, most of it centered on impact assessments and candidate consent. The specifics vary. The direction doesn’t. Taken together, these laws add texture to the spec: you need to generate impact assessments on demand and build consent architecture directly into the decision workflow, not bolt it on at the end as a disclosure.
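“Consent architecture in the workflow” can be read as a guard that sits in front of every automated tool call instead of a disclosure appended afterward. The sketch below assumes consent is tracked per candidate and per tool; `ConsentLedger` and `run_tool` are hypothetical names, and what counts as valid notice or consent varies by jurisdiction and is not modeled here. The same ledger also records which tool was applied to whom, which is the “account for its own use” capability described earlier.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Set, Tuple


@dataclass
class ConsentLedger:
    # (candidate_id, tool_id) pairs where notice was given and consent recorded
    granted: Set[Tuple[str, str]] = field(default_factory=set)
    # every time a tool was invoked, so usage can be reported on demand
    usage_log: List[Tuple[str, str]] = field(default_factory=list)

    def record_consent(self, candidate_id: str, tool_id: str) -> None:
        self.granted.add((candidate_id, tool_id))

    def revoke_consent(self, candidate_id: str, tool_id: str) -> None:
        self.granted.discard((candidate_id, tool_id))


def run_tool(ledger: ConsentLedger, candidate_id: str, tool_id: str,
             tool: Callable[[str], float]) -> float:
    """Apply an automated tool only if consent for this candidate and tool is on file."""
    if (candidate_id, tool_id) not in ledger.granted:
        raise PermissionError(f"no recorded consent from {candidate_id} for {tool_id}")
    ledger.usage_log.append((candidate_id, tool_id))
    return tool(candidate_id)


# Hypothetical usage; the lambda stands in for a real scoring tool.
ledger = ConsentLedger()
ledger.record_consent("c-1", "screener-v2")
score = run_tool(ledger, "c-1", "screener-v2", lambda candidate: 0.7)
```

Because the check lives inside the only path to the tool, there is no code path where an evaluation runs first and the disclosure is sorted out later.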

What the Spec Sheet Actually Says

When you read these frameworks together, as a converging design document rather than as competing jurisdictional requirements, they produce five consistent functional requirements:

- Verified criteria: Define what a qualified candidate or employee looks like using documented, verifiable, consistently applied standards. Not implicit criteria. Not criteria that shift by manager.

- Documented application: Evidence that teams applied those criteria as intended. Most systems fail here quietly. The criteria exist, but the evidence doesn’t.

- Human-reviewed checkpoints: At every consequential decision point, a qualified person must review and validate the outcome. “Human in the loop” only matters if the loop actually changes the decision.

- Auditable output: Systems must reproduce their decisions on demand, year over year. Not reconstruct them after the fact; reproduce them. That’s a data architecture question before it’s a compliance question.

- Traceable provenance: Trace the data, the logic, and the decision. If you can’t answer “why did the system score this candidate this way,” you don’t have a compliance problem yet. You have a transparency gap that compliance will eventually expose.
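The difference between reproducing and reconstructing a decision can be shown in a few lines. This is a minimal sketch under stated assumptions: scoring is deterministic, criteria are published as versions that are never edited in place, and each decision stores the criteria version plus a frozen copy of its inputs. `CRITERIA`, `readiness_score`, `decide`, and `replay` are hypothetical names, and the 0 to 5 proficiency scale is an assumption for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Tuple

# Hypothetical versioned criteria: required proficiency per skill (0-5 scale assumed).
# Published versions are treated as immutable; a change creates a new version key.
CRITERIA: Dict[str, Dict[str, int]] = {
    "analyst-v1": {"sql": 3, "statistics": 2, "communication": 3},
}


def readiness_score(criteria_version: str, proficiencies: Dict[str, int]) -> float:
    """Share of required skills met at or above the required level."""
    required = CRITERIA[criteria_version]
    met = sum(1 for skill, level in required.items() if proficiencies.get(skill, 0) >= level)
    return met / len(required)


@dataclass(frozen=True)
class Decision:
    candidate_id: str
    criteria_version: str                    # which published standard was applied
    proficiencies: Tuple[Tuple[str, int], ...]  # frozen copy of the inputs
    score: float


def decide(candidate_id: str, criteria_version: str, proficiencies: Dict[str, int]) -> Decision:
    score = readiness_score(criteria_version, proficiencies)
    return Decision(candidate_id, criteria_version, tuple(sorted(proficiencies.items())), score)


def replay(decision: Decision) -> bool:
    """Re-run the stored inputs against the stored criteria version and compare."""
    return readiness_score(decision.criteria_version, dict(decision.proficiencies)) == decision.score


decision = decide("c-1", "analyst-v1", {"sql": 4, "statistics": 1, "communication": 3})
print(decision.score, replay(decision))  # 0.6666666666666666 True
```

If any of the three assumptions breaks (criteria edited in place, inputs not captured, scoring that depends on hidden state), `replay` can no longer confirm the original outcome, and the organization is back to reconstructing.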

The Architecture Question Is the Compliance Question

Organizations struggling with these regulations often treat them as external requirements to overlay on existing systems. But these frameworks don’t describe an overlay. They describe a foundation.

That’s not a compliance checklist. That’s the architecture of a workforce intelligence system built for an environment where regulators, candidates, and employees all scrutinize talent decisions at the same time.

We built TalentGuard around skills intelligence that is structured, transparent, and human-in-the-loop by design. That wasn’t a response to regulation. But it turns out that strong system design and emerging regulatory frameworks point to the same place: the compliance question and the architecture question are the same. Organizations that recognize this early won’t just avoid liability. They’ll build something their workforce can trust.

About TalentGuard

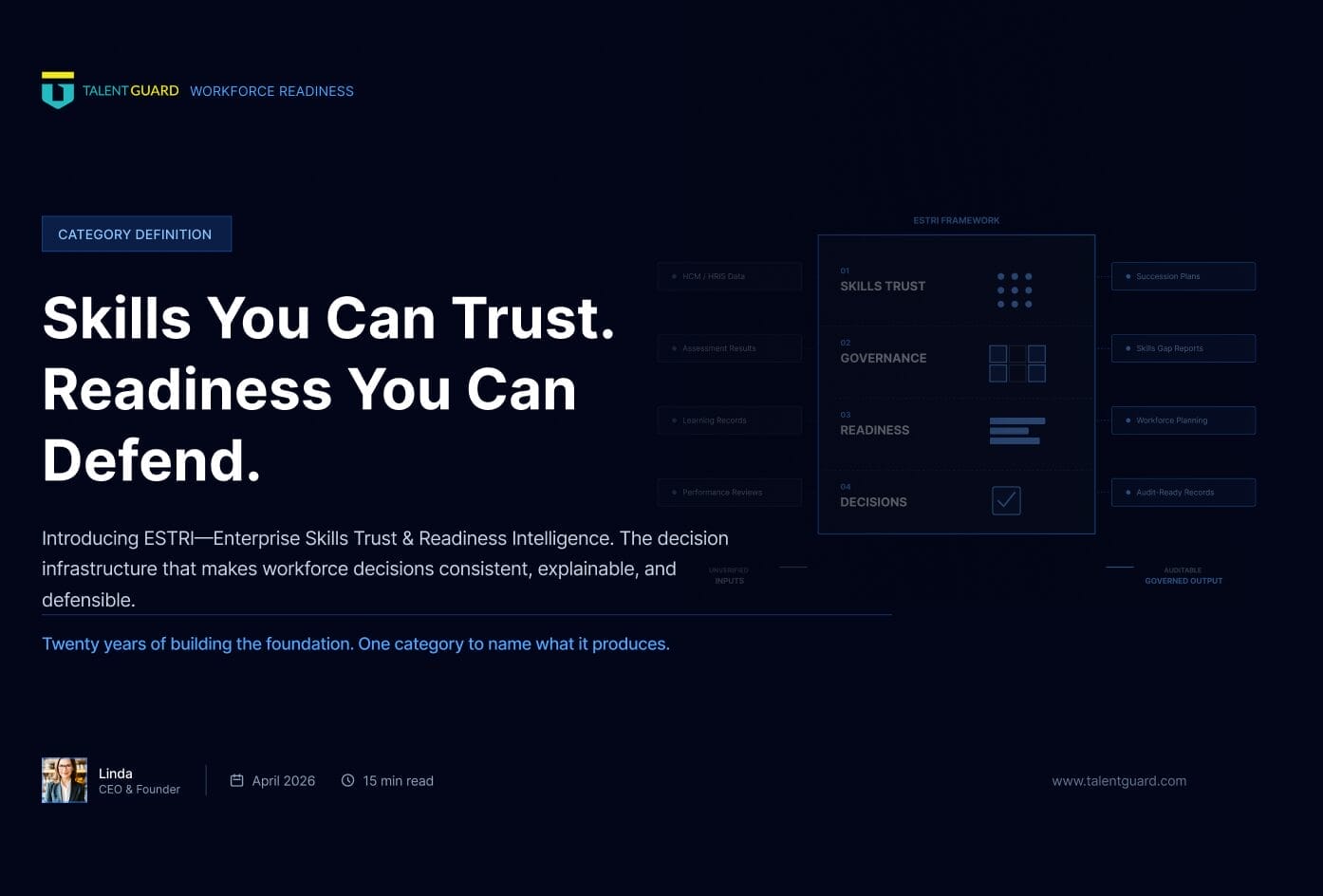

TalentGuard powers Enterprise Skill Trust & Readiness Intelligence—so organizations can make talent decisions that are consistent, scalable, and defensible. We turn fragmented skills signals into a governed foundation: role-based standards, proficiency expectations, evidence and provenance, and a complete change history. On top of that foundation, TalentGuard delivers explainable role readiness and gap insights—then connects action loops (development, mobility, performance, succession, and certifications) to measurable progress. The result: a trusted system of record for role and skills data that supports audit-ready reporting, stronger workforce planning, and better outcomes across the talent lifecycle. Request a demo to see how TalentGuard helps you establish Skill Trust and operationalize readiness intelligence across your enterprise.
