The New Workforce Intelligence Standard: What the Mobley Ruling, the EU AI Act, and Board Succession Reviews Now Require

Something quiet has happened in the last eighteen months, and most workforce intelligence leaders haven’t named it yet.

A federal court, a regulatory body, and a corporate governance committee — three institutions that rarely share a vocabulary, much less a question — have each independently started asking workforce systems to produce the same thing. Not a report. Not a metric. A documented, reconstructable evidence trail behind a talent decision that has already been made.

Three institutions. Three independent lines of inquiry. One shared object of scrutiny: the workforce system itself. And one shared expectation: the system must be able to produce documented, skill-grounded, defensible evidence behind the decisions it has already helped make. That expectation is new. The systems most organizations use to make those decisions are not.

What the Mobley Ruling Established

In May 2025, Judge Rita Lin of the U.S. District Court for the Northern District of California granted preliminary certification of a nationwide collective action in Mobley v. Workday, allowing it to proceed under the Age Discrimination in Employment Act on behalf of applicants aged 40 and older who had been screened through Workday’s AI platform since September 2020. In court filings, Workday disclosed that approximately 1.1 billion applications had been rejected through its system during the relevant period.

The legal reasoning underneath the certification matters more than the scale. The court had earlier found that Workday’s system was not, in Judge Lin’s language, “simply implementing in a rote way the criteria that employers set forth.” It was “participating in the decision-making process by recommending some candidates to move forward and rejecting others.” That participation, the court held, was sufficient to establish Workday’s potential direct liability as an agent of the employers whose hiring it was influencing.

In July 2025, the court expanded the collective to include applicants processed through the AI features of HiredScore, a company Workday acquired, rejecting the argument that different scoring algorithms or later acquisition dates should shield that portion of the platform from the same legal logic.

The question the court is putting to every employer using AI-assisted screening tools isn’t whether discrimination was intentional. It’s a different question: what evidence do you have that this was your decision, governed by your criteria, and defensible on your terms?

Most AI-assisted talent decisions aren’t structured to answer that question.

What NYC Local Law 144 and the EU AI Act Add

Nearly two years before the Mobley certification, in July 2023, NYC Local Law 144 entered active enforcement. Any employer using an automated employment decision tool to screen or evaluate candidates residing in New York City must now conduct an annual independent bias audit of the tool, publish the results, and notify candidates before the tool is used in their evaluation. Penalties range from $500 for a first violation to $1,500 per day for ongoing violations, with each failure to notify a candidate treated as a separate violation.

The EU AI Act has extended a structurally similar logic across every organization with European operations, classifying AI in HR as high-risk and placing documentation and audit-capability requirements on the tools used to make those decisions. Read as compliance texts, these frameworks list requirements. Read as a design specification, they describe something more specific: the functional requirements of a workforce system built for the new expectations.

An annual independent bias audit requires that decision outputs be auditable by an independent party at the end of each year. That’s a design requirement: the data must be structured, the decision logic must be traceable, the outcomes must be reproducible. Candidate notification requires that the system know which tools are being applied to which decisions, and surface that information on demand. A second design requirement.

The EU AI Act’s high-risk classification requires documented training data transparency and audit capability. A third requirement. The question these regulators are asking isn’t whether the employer means well. It’s whether the system was architected to be auditable before the audit arrives.
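To make the first of these design requirements concrete, here is a minimal sketch of the kind of calculation a bias audit depends on. As we understand the rules implementing Local Law 144, the core metric is the impact ratio: a category's selection rate divided by the selection rate of the most selected category. The function name, the category labels, and the sample data below are hypothetical, and a real audit is conducted by an independent auditor over far richer data.

```python
from collections import Counter


def impact_ratios(decisions):
    """Compute impact ratios from (category, was_selected) pairs.

    Each category's selection rate is divided by the highest
    selection rate observed across all categories.
    """
    totals = Counter()
    selected = Counter()
    for category, was_selected in decisions:
        totals[category] += 1
        if was_selected:
            selected[category] += 1

    rates = {c: selected[c] / totals[c] for c in totals}
    best = max(rates.values())
    if best == 0:
        raise ValueError("no candidates were selected in any category")
    return {c: rate / best for c, rate in rates.items()}


# Hypothetical screening outcomes, for illustration only.
sample = (
    [("group_a", True)] * 50 + [("group_a", False)] * 50
    + [("group_b", True)] * 30 + [("group_b", False)] * 70
)
ratios = impact_ratios(sample)
```

The point of the sketch is the input, not the arithmetic. The calculation is only possible if every decision was stored with its category and outcome in a structured form, which is exactly what the design requirement demands.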

What Boards Are Now Asking About Succession

A third version of the same question is surfacing in Fortune 500 boardrooms, and it’s the one most workforce leaders are least prepared for.

According to Challenger, Gray & Christmas data, a record 646 CEOs departed their roles in the first quarter of 2025. External hires to S&P 500 CEO roles rose to 33% in 2025, pushing internal promotion rates below 70% for the first time in eight years, per The Conference Board and Semler Brossy research. Externally hired CEOs are paid 25–35% more than internally promoted peers.

The succession data is not, on its own, a governance story. What makes it one is the Boardspan 2025 Board Benchmark Report finding that succession planning now ranks in the bottom 5% of nearly 60 governance topics boards assess. Boards know succession matters. Most cannot produce evidence that their succession pipelines have been built on verified readiness rather than relationships and tenure.

The question directors are now putting to CHROs is no longer “who’s our successor?” It’s “what evidence do you have that this candidate is ready, and how was that assessment made?” The answer “our CEO knows she’s ready” is not an evidence trail. Neither is a name on a slide. The evidence trail is skill-verified readiness, assessed against a role standard, documented at the moment of assessment, reproducible on demand. Most succession plans aren’t structured to produce it.
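One way to picture that evidence trail is as a record that stores the role standard, the verified skill levels, and the moment of assessment together, so the readiness conclusion can be recomputed later from the same inputs. The sketch below is a hypothetical illustration, not a description of any vendor's data model. The class name, field names, skill names, and level scale are all assumptions.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ReadinessEvidence:
    candidate_id: str
    role_standard_id: str
    required_levels: dict  # skill -> level the role standard requires
    verified_levels: dict  # skill -> level verified for the candidate
    assessed_at: str       # ISO 8601 timestamp captured at assessment time
    assessor: str

    def gaps(self):
        """Skills where the verified level falls short of the standard."""
        return {
            skill: required - self.verified_levels.get(skill, 0)
            for skill, required in self.required_levels.items()
            if self.verified_levels.get(skill, 0) < required
        }

    def is_ready(self):
        """Ready means no skill falls below the role standard."""
        return not self.gaps()

    def fingerprint(self):
        """SHA-256 of a canonical JSON form; identical records hash identically."""
        canonical = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical assessment, for illustration only.
record = ReadinessEvidence(
    candidate_id="cand-001",
    role_standard_id="cfo-standard-v3",
    required_levels={"capital_allocation": 4, "investor_relations": 3},
    verified_levels={"capital_allocation": 4, "investor_relations": 2},
    assessed_at="2025-06-30T14:00:00Z",
    assessor="assessor-17",
)
```

Here `record.gaps()` reports a one-level shortfall in `investor_relations`, so `record.is_ready()` is false. Because the conclusion is derived from stored inputs rather than asserted, anyone holding the record can reproduce it on demand.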

Why Three Institutions Are Asking the Same Question

A court, a regulator, and a board don’t normally converge on the same inquiry. Each is accountable to a different authority, operates under a different framework, and answers to a different constituency. The convergence here is not coincidence. What has changed is not the three institutions. It’s the object of their scrutiny.

For four decades, talent decisions lived inside the organization. A manager made a call, an HR partner documented it in the system of record, and the documentation was sufficient for the scrutiny the decision was likely to face. The scrutiny, in most cases, was internal. That boundary has dissolved. Talent decisions are now scrutinized externally by plaintiffs’ attorneys, regulators, independent bias auditors, skilled persons under regulatory review, state AI enforcement bodies, and boards asking succession questions they never used to ask. The external scrutiny isn’t hypothetical. The Mobley collective could ultimately reach hundreds of millions of applicants. NYC Local Law 144 has been in enforcement for nearly three years. The EU AI Act’s requirements are active. Board succession inquiries are routine agenda items.

When an internal decision becomes an externally scrutinized decision, the infrastructure that supported it must change. Internal decisions require a system of record and documentation that the decision occurred. External scrutiny requires a system of evidence and documentation that the decision was made well. Those are different systems.

What Workforce Systems Are Being Asked to Become

HR systems were designed, across forty years of enterprise software history, to record decisions. They captured who was hired, who was promoted, who moved, who left. The system of record was the product. What a court, a regulator, and a board are now asking is something architecturally different. Not what was decided, but what evidence exists that the decision was made well. The system is being asked to carry the weight of proof, not just the weight of record.

Most workforce systems haven’t been redesigned for that job. They weren’t built with verified skill standards as a foundational layer. They weren’t built with governance workflows that produce an audit trail at the moment of decision. They weren’t built to be interrogated by a court, a regulator, or a board member with equal fluency. This is the shift underneath the three institutional inquiries. Mobley, NYC Local Law 144, the EU AI Act, and the Boardspan succession findings aren’t four unrelated pressures. They’re four visible instances of the same underlying architectural change in what a workforce system is expected to produce.
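An audit trail produced at the moment of decision can be sketched as an append-only log in which each entry carries a hash of the entry before it, so a later edit to any recorded rationale becomes detectable. This is a generic hash-chaining technique shown under stated assumptions, not the design of any particular product. The function names and payload fields are hypothetical.

```python
import copy
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash, payload):
    """Hash the previous entry's hash together with this entry's payload."""
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_decision(log, payload):
    """Append a decision record that is chained to the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({
        "prev": prev_hash,
        "payload": payload,
        "hash": _entry_hash(prev_hash, payload),
    })


def verify(log):
    """Return True only if every entry still matches its recorded hash."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical decisions, for illustration only.
log = []
append_decision(log, {"decision": "advance", "candidate": "cand-001",
                      "rationale": "meets role standard v3", "decided_by": "manager-42"})
append_decision(log, {"decision": "hold", "candidate": "cand-002",
                      "rationale": "gap in one required skill", "decided_by": "manager-42"})
intact = verify(log)

# Simulate an after-the-fact edit on a copy of the log.
altered = copy.deepcopy(log)
altered[0]["payload"]["rationale"] = "edited after the fact"
tamper_detected = not verify(altered)
```

The rationale is captured when the decision is made, and the chain makes later revision visible. That is the difference between recording that a decision occurred and being able to show how it was made.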

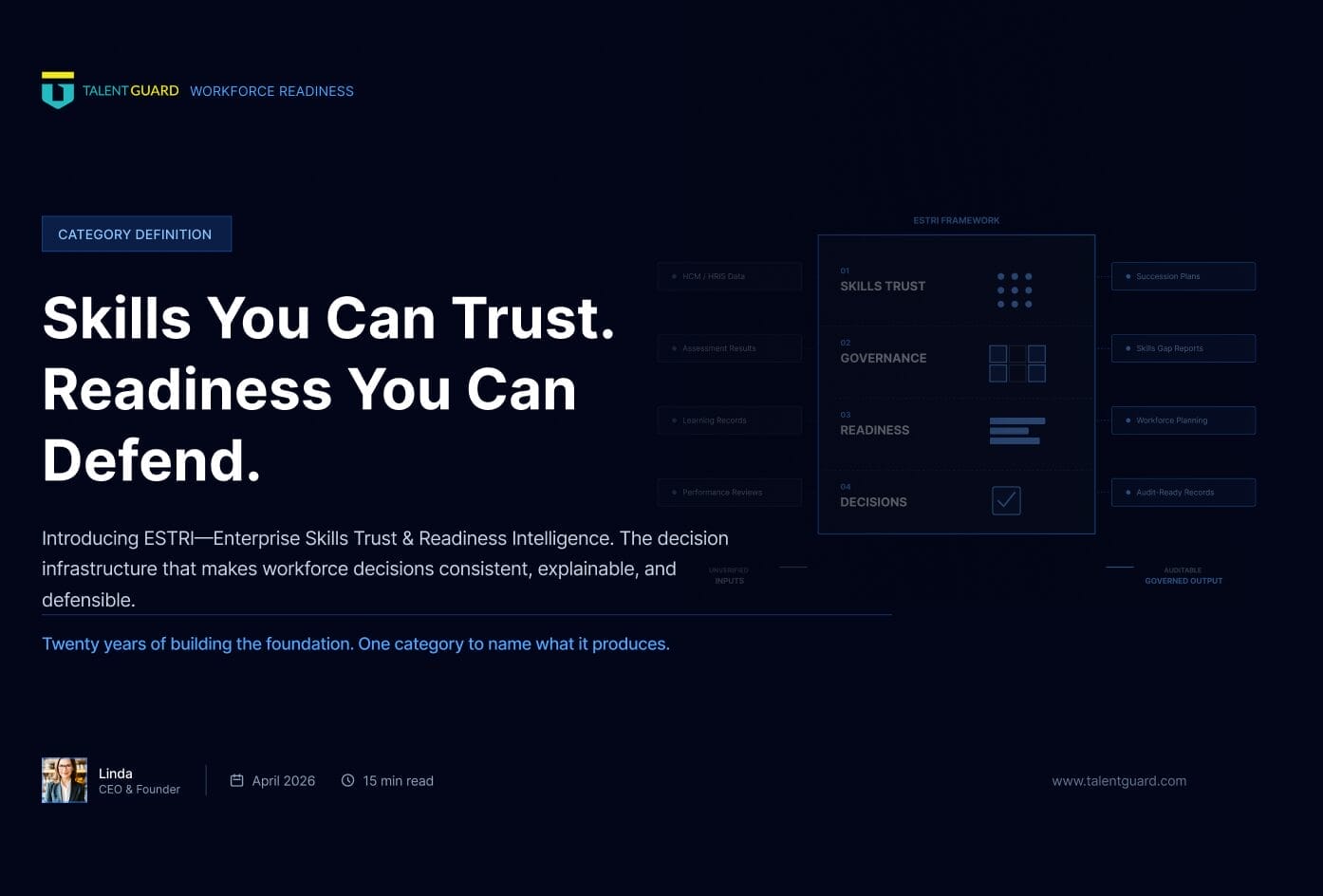

The category that change is producing has a name. Workforce intelligence systems are organized around four capabilities that systems of record were never built to deliver: governed skills data that decisions can be traced to, decision workflows that capture rationale at the point of decision, readiness assessed against verified standards, and a defensible evidence trail that can be reconstructed for any examiner.

At TalentGuard, we call this category Enterprise Skills Trust and Readiness Intelligence — ESTRI. It’s the architecture we believe workforce systems are becoming: not an HR tool upgraded with AI, but an intelligence layer built for the evidentiary expectations that courts, regulators, and boards are now converging on.
